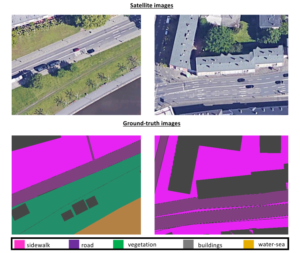

This satellite dataset consists of overhead imagery from Google Maps, downloaded with the Google Maps Downloader⁴. The imagery is sufficiently orthorectified and georeferenced for the purposes of our application. The finest ground sampling distance (GSD) offered by the service was computed as Sx = 8 cm/pixel along the x axis and Sy = 7 cm/pixel along the y axis. From this imagery, a set of 360 × 480 × 3 images was created to serve as input to a neural network such as SegNet.
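The GSD values translate directly into the ground area covered by each network input. The sketch below assumes that 360 × 480 denotes rows × columns (height × width) and that inputs are cut as non-overlapping tiles from a larger downloaded mosaic. The tiling scheme and the helper names `tile_footprint_m` and `tile_origins` are illustrative assumptions, not the authors' pipeline. Under these assumptions one tile spans about 480 × 0.08 = 38.4 m by 360 × 0.07 = 25.2 m on the ground.

```python
# GSD reported for the downloaded imagery, in metres per pixel.
GSD_X_M = 0.08  # Sx = 8 cm/pixel (x axis, i.e. image columns)
GSD_Y_M = 0.07  # Sy = 7 cm/pixel (y axis, i.e. image rows)

# Assumed network input size: 360 rows x 480 columns (x 3 colour channels).
TILE_H, TILE_W = 360, 480


def tile_footprint_m(tile_h: int = TILE_H, tile_w: int = TILE_W) -> tuple[float, float]:
    """Return the ground extent (width_m, height_m) covered by one tile."""
    return tile_w * GSD_X_M, tile_h * GSD_Y_M


def tile_origins(mosaic_h: int, mosaic_w: int,
                 tile_h: int = TILE_H, tile_w: int = TILE_W) -> list[tuple[int, int]]:
    """Top-left (row, col) origins of non-overlapping tiles that fit entirely
    inside a mosaic of size mosaic_h x mosaic_w. Partial tiles at the right
    and bottom borders are discarded (hypothetical tiling policy)."""
    return [(r, c)
            for r in range(0, mosaic_h - tile_h + 1, tile_h)
            for c in range(0, mosaic_w - tile_w + 1, tile_w)]
```

For example, `tile_origins(720, 960)` yields the four origins `(0, 0)`, `(0, 480)`, `(360, 0)` and `(360, 480)`. Each tile would then be sliced as `mosaic[r:r + 360, c:c + 480, :]`. Discarding partial border tiles keeps every input at the exact size the network expects, at the cost of a thin unused strip along the right and bottom edges.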

We propose splitting the available data into training, validation and test subsets containing 60%, 20% and 20% of the samples, respectively.
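The text does not state how samples are assigned to the subsets. A minimal sketch, assuming a uniformly random assignment with a fixed seed for reproducibility, is shown below. The function name `split_60_20_20` is hypothetical. Integer arithmetic is used for the subset sizes, and the test subset takes the remainder so that no sample is dropped when the count is not divisible by five.

```python
import random
from typing import Sequence, TypeVar

T = TypeVar("T")


def split_60_20_20(samples: Sequence[T], seed: int = 0) -> tuple[list[T], list[T], list[T]]:
    """Shuffle samples and split them into train/validation/test subsets
    holding roughly 60%, 20% and 20% of the data."""
    items = list(samples)
    random.Random(seed).shuffle(items)  # fixed seed -> reproducible split
    n = len(items)
    n_train = n * 60 // 100
    n_val = n * 20 // 100
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]  # remainder goes to test
    return train, val, test
```

Because neighbouring tiles cut from the same mosaic can look very similar, a split by geographic region rather than by individual tile may give a less optimistic estimate of test performance. That choice is left open here.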

Finally, we manually annotated every sample with six semantic labels (buildings, sidewalks, roads, water, vegetation and terrain), producing the ground truth for each frame.
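For training, such annotations are typically stored as per-pixel class-index masks. The source does not say how the ground truth is encoded. The sketch below assumes colour-coded RGB annotation images and converts them to index masks. The `PALETTE` colours, the class order, the `IGNORE` value of 255 and the helper names are all hypothetical choices made for illustration. The per-class pixel frequencies it also computes are the kind of statistic often used to weight the loss for imbalanced classes.

```python
import numpy as np

# The six semantic classes named in the text, in an assumed id order.
CLASSES = ["buildings", "sidewalks", "roads", "water", "vegetation", "terrain"]

# Hypothetical colour palette for the annotation images (not given in the source).
PALETTE = {
    (255, 0, 0): 0,      # buildings
    (255, 255, 0): 1,    # sidewalks
    (128, 128, 128): 2,  # roads
    (0, 0, 255): 3,      # water
    (0, 255, 0): 4,      # vegetation
    (139, 69, 19): 5,    # terrain
}
IGNORE = 255  # assumed id for pixels whose colour is not in the palette


def rgb_to_index_mask(annotation: np.ndarray) -> np.ndarray:
    """Convert an H x W x 3 uint8 colour annotation into an H x W mask of class ids."""
    mask = np.full(annotation.shape[:2], IGNORE, dtype=np.uint8)
    for colour, class_id in PALETTE.items():
        # A pixel matches when all three channels equal the palette colour.
        matches = np.all(annotation == np.array(colour, dtype=annotation.dtype), axis=-1)
        mask[matches] = class_id
    return mask


def class_frequencies(mask: np.ndarray) -> dict[str, float]:
    """Fraction of labelled (non-IGNORE) pixels belonging to each class."""
    labelled = mask[mask != IGNORE]
    counts = np.bincount(labelled, minlength=len(CLASSES))
    total = max(labelled.size, 1)  # avoid division by zero on empty masks
    return {name: float(counts[i]) / total for i, name in enumerate(CLASSES)}
```

Mapping unknown colours to a dedicated ignore id, rather than to one of the six classes, keeps annotation mistakes from silently becoming training labels.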

⁴ http://allmapsoft.com/gmd/